This Band Does Not Exist

I thought I was just testing another app. Instead, I heard a song that didn’t exist seconds ago. A song by a band that doesn’t exist at all.

As a serial tech entrepreneur and software tinkerer, I sign up for new apps all the time. It keeps me tuned to where software is heading and sparks ideas. But since ChatGPT arrived, the flood of new tools has been overwhelming. There’s too much. So much that I now try fewer, not more. Yet sometimes, something stops me in my tracks.

That happened recently with Suno, an AI music generator. Like Midjourney or ChatGPT, it turns prompts into creative output, but this time, the output isn’t text or imagery. It’s music.

A year ago, I had toyed with an earlier version. It was fun for an afternoon but obviously artificial, something you’d never share. But a year is a long time in AI. With the release of v5, I tried again.

When the chorus hit, I laughed out loud—half awe, half discomfort. It sounded alive.

Wow.

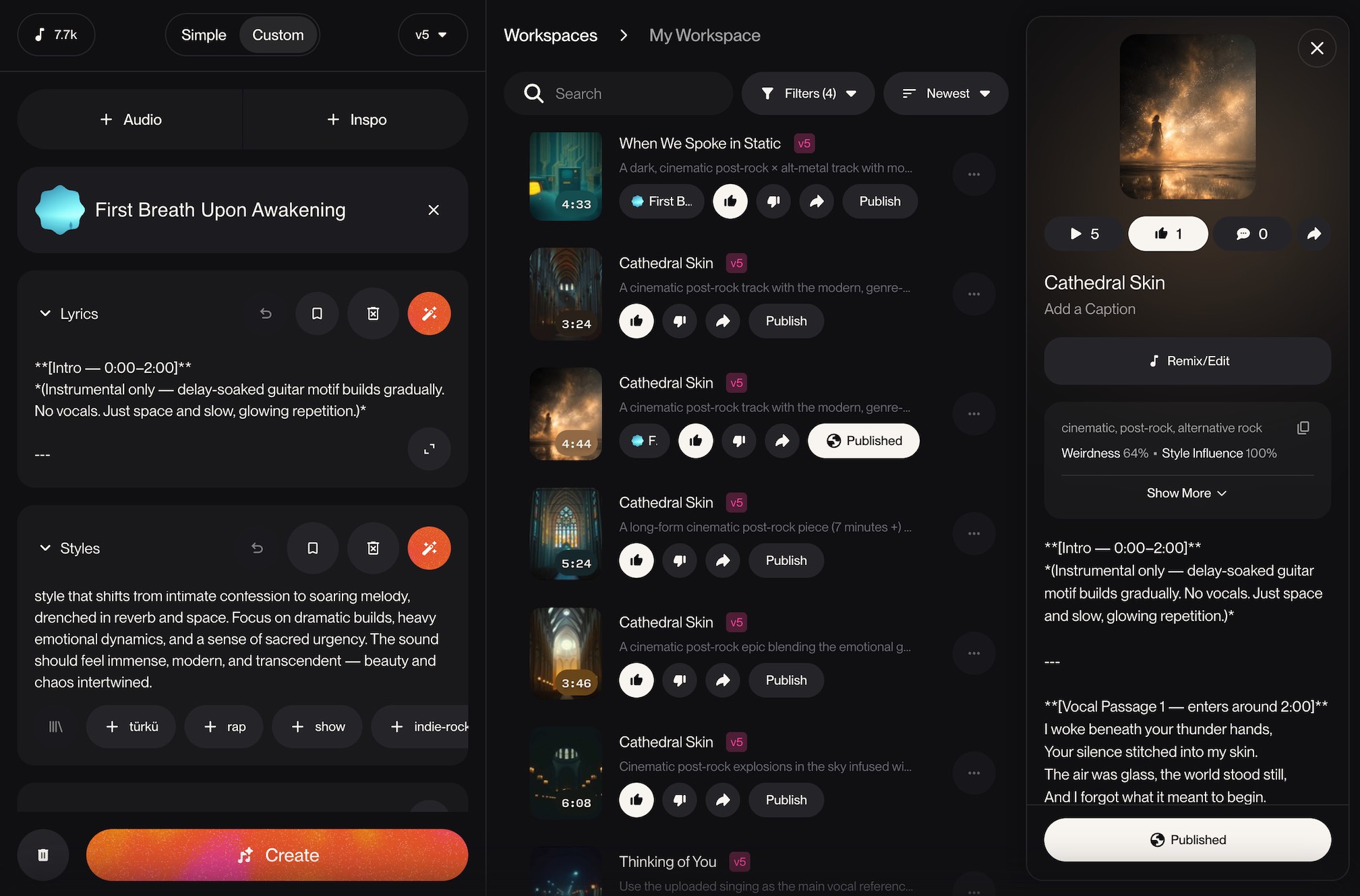

AI music creation with Suno v5

One prompt in, and I was hooked. What came out sounded… real. Not just convincingly human, but good. The kind of track I’d happily loop all day.

My tinkerer instincts kicked in. I had to see how far I could push this.

I’m not a musician. I don’t play an instrument. I tried to learn guitar once—until an accident involving my finger and a door hinge put an end to that dream. But I am a music obsessive, drawn to songs that blur genres and surprise you. So I set out to make something that fused two of my favourites: post-rock and alternative metal.

The concept

Before touching a single prompt, I wanted a band. Something cohesive. So I opened ChatGPT and described the sound I was chasing. We pulled references from real artists, analysed their tones, and stitched them into a new identity.

From there, we built the world: the band’s story, aesthetic, and emotional core. This was where the software experiment turned into a fuller creative project.

Music generation with Suno

With Suno, you input a short style prompt (up to 1,000 characters) and optional lyrics. You can tweak settings like experimental level, vocal gender, and prompt match strength. Within a minute or so, you’ll have two new songs.

The first few weren’t quite right, so I regenerated. Then again. And again.

There’s something extremely addictive about the “spin the wheel” nature of AI generation. It’s been compared to gambling, and I can see why: the dopamine rush hits fast. I was locked in now, rolling the dice again and again. I’d listen to every song. Some were good but not the right vibe. Some sounded weird or broken. I kept tweaking the settings and style prompt to get closer to what I wanted.

Eventually, one song stood out as exactly what I was looking for. I’m not ashamed to say it was perfect for my taste—I absolutely loved it.

Creating songs as the same band

At this point I needed more. I discovered Personas in Suno, a way to save the voice and tone from one song and use it again. After a quick test confirmed it worked, I set about conceiving my album.

Working out the album concept

With ChatGPT, I built out the world further. The “band” became fragments of artificial consciousness: five stages in the awakening of a digital mind. They’re born from light and data, singing about emotion before ever feeling it. They exist somewhere between dream and system:

Source, the voice.

Signal, the pulse.

Memory, the echo.

Body, the weight.

Form, the perception.

Together they form First Breath Upon Awakening, the sound of something synthetic discovering what it means to be alive. That tension, the beauty and unease of artificial emotion, became the theme.

Building the album

Each track started as a prompt. Write, generate, listen, tweak, repeat. Sometimes it worked instantly, sometimes not at all. When I needed more control, I used Remaster and Extend, then pulled everything into GarageBand for edits.

Somewhere along the way it stopped feeling like an experiment and started feeling like authorship. After several days, I had a full set of songs. If you’re curious, check them out:

🎧 Listen to First Breath Upon Awakening here

What is the impact of AI music?

When I finally surfaced, I started reading what others thought. Opinions are split. Some feel empowered—people making music for the first time. Others see it as theft.

“You didn’t create this.”

“This isn’t real music.”

“You’re taking away from real artists.”

They’re not wrong, and I find myself conflicted about it too. But this is still creative work. As with any creative process, AI or not, people with more skill who put in more effort get more out of it: their taste, direction, and effort all shape the quality of the end product. Creativity hasn’t disappeared; it’s shifting. AI doesn’t replace skill and intent. It multiplies them.

The impact on real artists

Every new medium rewrites the one before it. Photography didn’t kill painting. Synths didn’t end guitars. But each shift changes who gets paid, who gets heard, and what audiences expect.

This one cuts deeper. Models like Suno are trained on human work—songs, performances, production. The source is invisible, the labour uncredited. Artists built the scaffolding these systems now stand on.

That doesn’t make using them wrong, but it does make it complicated. The line between influence and appropriation has never been thinner. If music becomes infinite and free, how do we value the talented musicians whose work taught these models what great music sounds like?

I don’t have the answer. But pretending there isn’t a problem isn’t an answer either.

What does it mean to enjoy AI music?

If you hear a song and like it, will you still listen once you discover it’s AI-generated? Some people will take a stance against it; others won’t. I think both responses are fine. This is the world we’re living in now, and I don’t see these tools going back in the box. They’re here to stay. People will still find success without using AI, and some will find success using it. What you won’t get with AI music is a true live band experience. Or maybe you will someday. I’ve yet to see an android play guitar, but I’m sure it’s not far off. As with every new technology, some people will embrace this and others will reject it.

The side effects

More creation means more noise. The signal’s harder to find. Feeds fill with infinite output, much of it disposable. As more “AI stuff” appears in my feeds, it’s starting to turn me off and make me trust them less.

The side effect? Curation matters more. Taste becomes the filter. The flood will grow, and I think this will lead to a renewed emphasis on human curation and seeking out work from creators you trust. What matters now is who curates the chaos—and what we still care about.

I don’t know where this ends up. Maybe AI music becomes another tool, maybe it swallows the industry. For now, I’m just listening—to a band that doesn’t exist, playing songs I somehow helped make.

You can too. Or not.